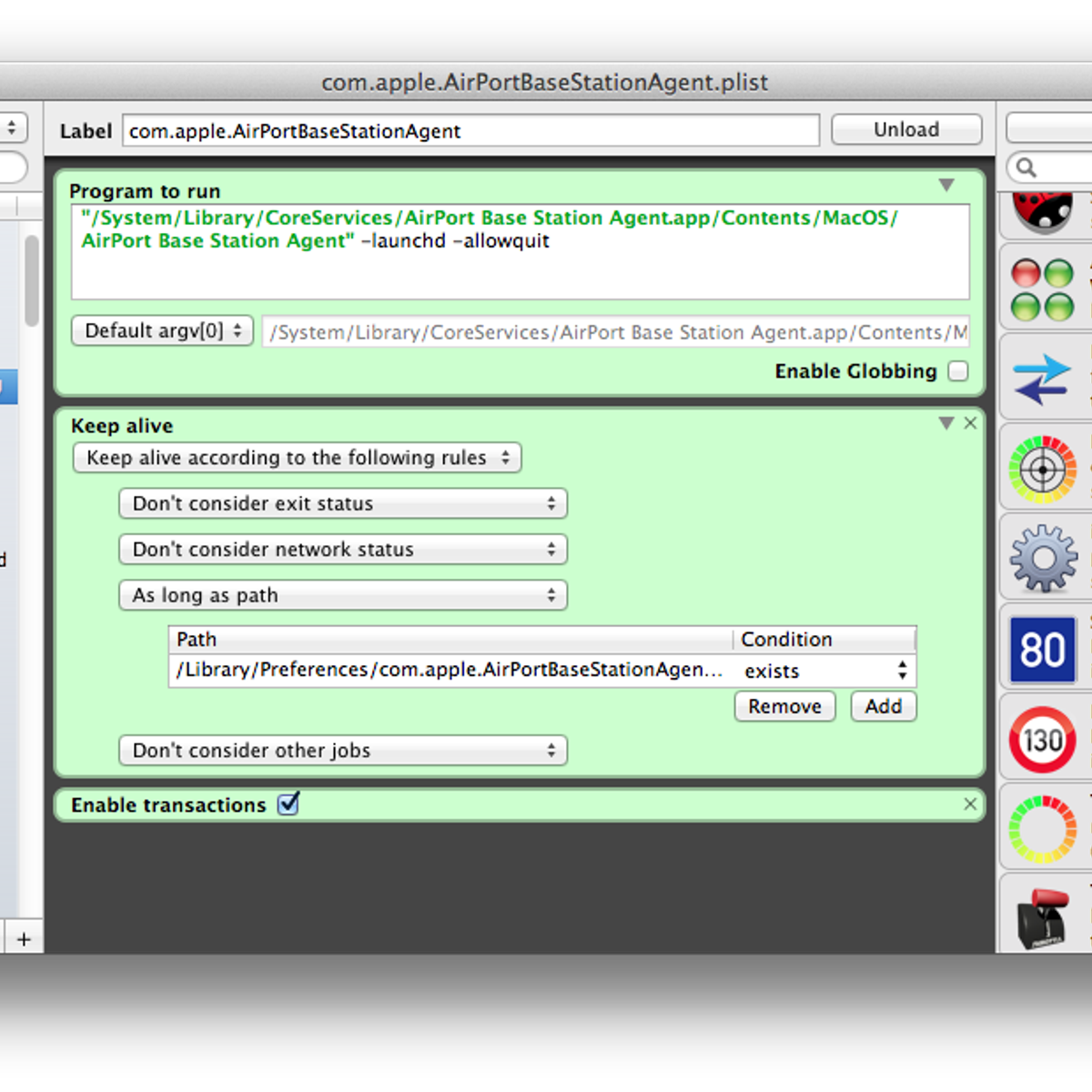


Posted 27 March, 2017 by screamingfrog in Screaming Frog Log File Analyser

Screaming Frog Log File Analyser Update – Version 2.0

I am delighted to announce the release of the Screaming Frog Log File Analyser 2.0, codenamed ‘Snoop’.

Since launch last year, we've released eight smaller updates to the Log File Analyser, but this is a much larger and more significant update, with some very exciting new and unique features.

Let's take a look at what's new in version 2.0 of the Log File Analyser.

1) Search Engine Bot Verification

You can now automatically verify search engine bots, either when uploading a log file or retrospectively after you have uploaded log files to a project.

Search engine bots are often spoofed by other bots or crawlers, including our own SEO Spider software when emulating requests from specific search engine user-agents. Hence, when analysing logs, it's important to know which events are genuine, and those that can be discounted.

The Log File Analyser will verify all major search engine bots according to their individual guidelines. For example, for Googlebot verification, the Log File Analyser will perform a reverse DNS lookup, verify the matching domain name and then run a forward DNS using the host command to verify it's the same original requesting IP.
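The reverse-then-forward DNS check described above can be sketched roughly as follows. This is a minimal illustration, not the Log File Analyser's own code: it uses Python's `socket` module rather than the `host` command, the hostname suffix list is an assumption, and `verify_googlebot` with its injectable `reverse_lookup` / `forward_lookup` parameters are hypothetical names chosen for the example.

```python
import socket

# Assumed hostname suffixes for genuine Googlebot hosts; check Google's
# current guidance before relying on this list.
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")


def verify_googlebot(ip, reverse_lookup=None, forward_lookup=None):
    """Return 'verified', 'spoofed' or 'error' for an IP claiming to be Googlebot."""
    # Default to real DNS lookups; callers can inject fakes for testing.
    reverse_lookup = reverse_lookup or (lambda addr: socket.gethostbyaddr(addr)[0])
    forward_lookup = forward_lookup or (lambda host: socket.gethostbyname_ex(host)[2])

    try:
        host = reverse_lookup(ip)  # step 1: reverse DNS lookup
    except OSError:
        return "error"

    # Step 2: the returned hostname must belong to an expected domain.
    if not host.lower().rstrip(".").endswith(GOOGLE_SUFFIXES):
        return "spoofed"

    try:
        addresses = forward_lookup(host)  # step 3: forward DNS lookup
    except OSError:
        return "error"

    # Step 4: the forward lookup must include the original requesting IP.
    return "verified" if ip in addresses else "spoofed"
```

The three return values mirror the verified, spoofed and error states mentioned in this section; how the application itself classifies a failed lookup is not stated in the post.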

After validation, you can use the verification status filter to view log events that are verified or spoofed, or that hit errors during verification.

2) Directories Tab With Aggregated Log Events

There's a new ‘directories’ tab, which aggregates log event data by path. If a site has an intuitive URL structure, it's really easy to see exactly where the search engines are spending most of their time crawling, whether that's the /services/ pages, the /blog/ or elsewhere. This view also aggregates errors and response code ‘buckets’.

This aggregated view makes it very easy to identify sections of crawl waste or those that might need attention due to lack of crawl activity. The ‘directories’ tab can be viewed in a traditional ‘list’ format as well as the directory tree view.
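To illustrate the idea of aggregating events by path, here is a minimal sketch. It assumes each log event has already been reduced to a `(path, status_code)` tuple and groups only by the top-level directory; `directory_of` and `aggregate_by_directory` are hypothetical names, and the application's own grouping may well differ.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def directory_of(url):
    """Return the top-level directory of a URL path, e.g. '/blog/post-1' -> '/blog/'."""
    parts = urlsplit(url).path.split("/")
    # Everything except the last segment is a directory; drop empty pieces.
    dirs = [p for p in parts[:-1] if p]
    return f"/{dirs[0]}/" if dirs else "/"


def aggregate_by_directory(events):
    """Count events and response code buckets per top-level directory."""
    summary = defaultdict(
        lambda: {"events": 0, "2xx": 0, "3xx": 0, "4xx": 0, "5xx": 0}
    )
    for path, status in events:
        row = summary[directory_of(path)]
        row["events"] += 1
        bucket = f"{status // 100}xx"
        if bucket in row:  # ignore 1xx and anything unexpected
            row[bucket] += 1
    return dict(summary)
```

A directory with many events but mostly 3xx or 4xx responses would be a candidate for the crawl waste described above.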

3) Directory Tree View

The directory tree view seen in the new ‘directories’ tab is now also available within other tabs, such as ‘URLs’ and ‘Response Codes’.

There's a similar feature in our SEO Spider software, and it can often make visualising a site's structure easier than traditional list view.

4) User-Agents, IPs & Referers Tabs

You can now view aggregated log event data around each of these attributes, including number of events, unique URLs requested, bytes, response times, errors and more.

Analysing log event data aggregated around specific user-agents allows you to gain insights into crawl frequency, spread and errors encountered.
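As a rough sketch of this kind of per-user-agent aggregation, the example below computes events, unique URLs, bytes, average response time and errors. The event dictionary keys (`user_agent`, `url`, `bytes`, `ms`, `status`) and the `AgentStats` class are assumptions made for illustration, not the application's data model.

```python
from dataclasses import dataclass, field


@dataclass
class AgentStats:
    events: int = 0
    urls: set = field(default_factory=set)
    total_bytes: int = 0
    total_ms: int = 0
    errors: int = 0

    @property
    def average_ms(self):
        """Mean response time in milliseconds across all events."""
        return self.total_ms / self.events if self.events else 0.0


def aggregate_by_user_agent(events):
    """Group hypothetical event dicts by user-agent string."""
    stats = {}
    for event in events:
        s = stats.setdefault(event["user_agent"], AgentStats())
        s.events += 1
        s.urls.add(event["url"])
        s.total_bytes += event["bytes"]
        s.total_ms += event["ms"]
        if event["status"] >= 400:  # treat 4xx and 5xx responses as errors
            s.errors += 1
    return stats
```

The same grouping works for IPs or referers by swapping the key used in `setdefault`.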

In an ‘all log event’ project, you can view every user-agent in a log file, including browsers and various other bots across the web, alongside search engine bots.

The IPs tab allows you to view the most common IPs from which the search engine bots are making requests. Or perhaps you want to see specific IPs that have been spoofing search engines to crawl your site (before blocking them!).

5) Amazon Elastic Load Balancing Support

We've had a lot of requests for this feature. The Log File Analyser now supports the specific AWS Elastic Load Balancing log file format.

This means you can simply drag and drop the raw access logs from Amazon Elastic Load Balancing servers straight into the Log File Analyser, and it will do the rest.
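For readers curious what such a log line looks like, here is a minimal parsing sketch. It assumes the space-delimited Classic Load Balancer access log layout (15 fields, with the request and user-agent in double quotes); Application Load Balancer logs carry additional fields and would need a different field list. `ELB_FIELDS` and `parse_elb_line` are hypothetical names, and this is not how the Log File Analyser itself is implemented.

```python
import shlex

# Assumed field order for a Classic Load Balancer access log entry.
ELB_FIELDS = [
    "timestamp", "elb", "client", "backend",
    "request_processing_time", "backend_processing_time",
    "response_processing_time", "elb_status_code", "backend_status_code",
    "received_bytes", "sent_bytes", "request", "user_agent",
    "ssl_cipher", "ssl_protocol",
]


def parse_elb_line(line):
    """Parse one access log line into a dict of named fields."""
    values = shlex.split(line)  # respects the double-quoted fields
    if len(values) < len(ELB_FIELDS):
        raise ValueError(f"expected {len(ELB_FIELDS)} fields, got {len(values)}")
    entry = dict(zip(ELB_FIELDS, values))

    # The request field holds 'METHOD URL PROTOCOL'.
    method, url, protocol = entry["request"].split(" ", 2)
    entry.update(method=method, url=url, protocol=protocol)

    # The client field is 'ip:port'; keep the IP for bot verification.
    entry["client_ip"] = entry["client"].rsplit(":", 1)[0]
    entry["elb_status_code"] = int(entry["elb_status_code"])
    entry["sent_bytes"] = int(entry["sent_bytes"])
    return entry
```

The extracted `client_ip`, `url`, `user_agent` and status code are the pieces a log analysis would typically need from each event.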

Other Updates

We have also included some other smaller updates and bug fixes in version 2.0 of the Screaming Frog Log File Analyser, which include the following –

- An ‘All Googlebots’ filter to make it easier to view all Googlebot crawlers at once.

- Users do not need to install Java for the application independently anymore.

- RAR file support.

- Bz2 file support.

We hope you like the update! Please do let us know if you experience any problems, or discover any bugs at all.

Thanks to the SEO community as usual, for all the feedback and suggestions for improving the Log File Analyser – there's plenty more to come. Now go and download version 2.0 of the Log File Analyser!

Small Update – Version 2.1 Released 12th July 2017

We have just released a small update to version 2.1 of the Screaming Frog Log File Analyser. This release includes mainly bug fixes. The full list includes –

- Add support for custom W3C field for deriving protocol from Server Variable HTTPS.

- Speed up duplicate event checking.

- Don't display Average Response time with decimal places.

- Fix crash when importing invalid W3C log file.

- Fix crash when importing an empty zip file.

- Fix bot verification during import to use correct IP.

- Don't fail entire import when a zip file won't uncompress.

- Fix Event tab in lower window of User Agent master tab to filter on selected UA.

- Fix crash when exporting from the Directories tab.

- Fix over over numbers not updating after URL Data import.

- Fix broken search in details tabs.

- Fix crash on sort.